OVERVIEW

Salesforce Education Cloud integrates AI to support students across the academic lifecycle. While AI tools offer efficiency and personalization, they also raise critical concerns around data privacy, transparency, and trust, especially in higher education environments where tool usage can feel mandatory.

This project explored how students perceive AI-powered education tools and how Salesforce might design AI experiences that help students feel informed, secure, and in control of their data.

PROBLEM

As AI becomes embedded in education platforms, students increasingly rely on these tools for studying, writing, coding, and planning coursework. However, many students lack clarity around:

What data is being collected

How long it is stored

Who has access to it

Whether they have meaningful choice or control

This lack of transparency places students in a passive position, where they feel obligated to accept AI systems without fully understanding their implications.

How might we ensure that university students feel informed, secure, and in control when AI-powered education tools collect and use their data?

RESEARCH APPROACH

We conducted qualitative interviews to capture perspectives across multiple roles within higher education, including:

A subject-matter expert

Faculty members

Undergraduate students

Graduate students

Alumni

Interviews focused on:

How students currently use AI tools

Awareness of AI data practices

Trust and mistrust in AI systems

Expectations around transparency and consent

All interviews were anonymized and synthesized collaboratively.

SYNTHESIS & ANALYSIS

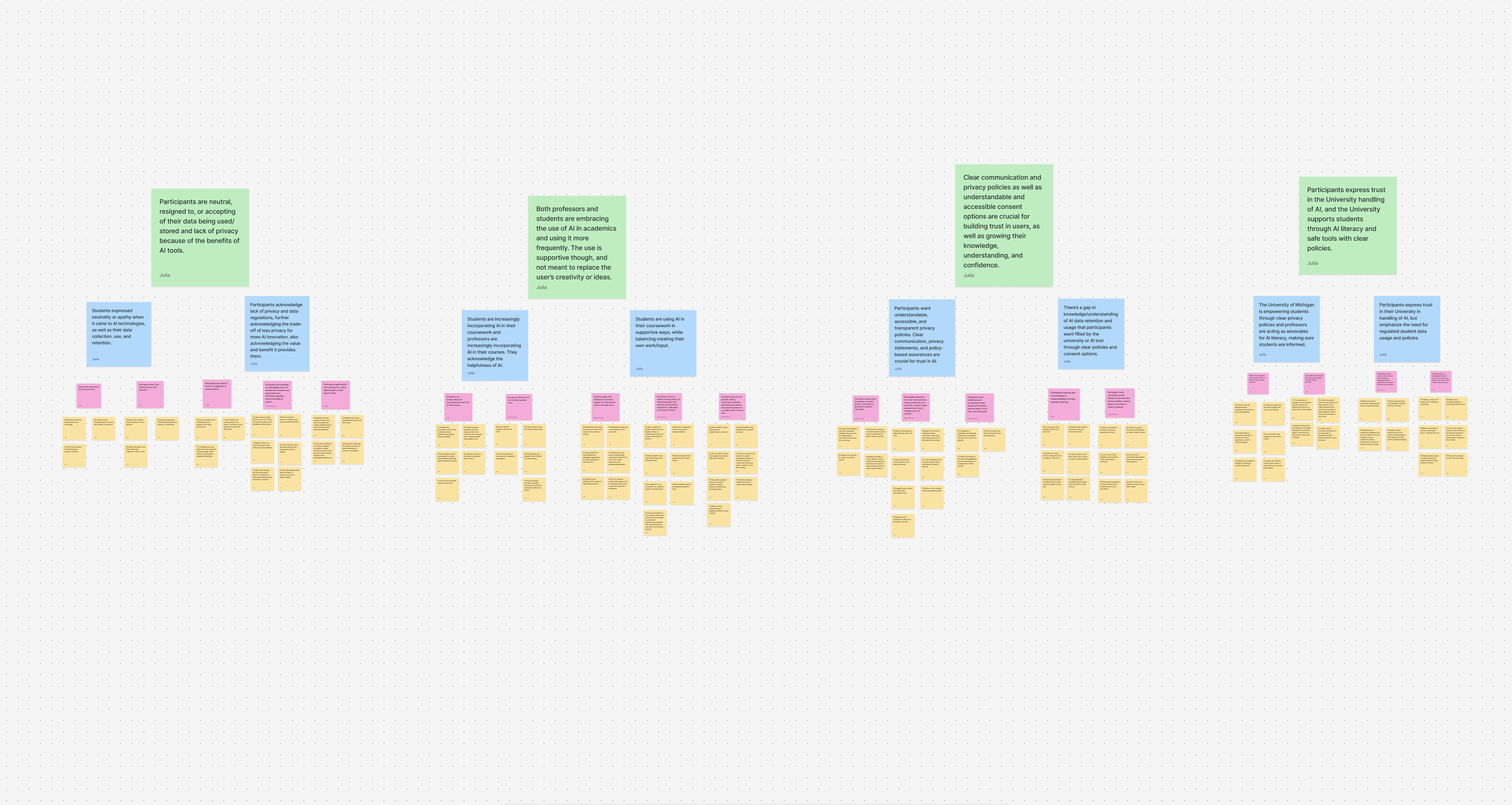

After the interviews, our team synthesized the qualitative data into themes using affinity mapping. We clustered observations related to emotions, behaviors, concerns, and needs to identify systemic patterns across participants.

KEY INSIGHTS

1. Privacy resignation is widespread

Students generally understand that AI tools collect data, but many feel they have no real choice.

Privacy policies are seen as unavoidable barriers rather than meaningful consent mechanisms. Students often click “agree” to complete required academic tasks, even when uncomfortable.

2. Students use AI as support

Students actively use AI to summarize readings, clarify difficult concepts, and proofread or refine their work.

However, they consistently emphasized that creative ownership remains human-driven. AI is seen as a tool for reducing tedious work, not replacing student agency.

3. Mistrust stems from lack of transparency

Students expressed mistrust due to inaccurate or fabricated AI outputs, a lack of visibility into how AI decisions are made, and fear of institutional surveillance.

Even when institutional safeguards existed (e.g., data retention limits), students were often unaware of these protections.

RECOMMENDATIONS

1. Increase AI literacy through accessible education

Create student-facing learning materials (micro-lessons, onboarding modules) that explain:

What data AI tools collect

Why that data is used

What “model training” means

How long data is retained and how it is protected

Impact: Reduces anxiety, corrects misconceptions, and enables informed decision-making.

2. Provide meaningful student control and consent

Introduce a student-facing consent dashboard within Education Cloud that allows users to:

See what data is collected

Opt in to or out of nonessential uses

Adjust consent over time

View logs of data access

Impact: Shifts students from passive acceptance to active participation.

3. Embed transparency directly into workflows

Surface real-time, plain-language explanations during AI interactions (e.g., tooltips, icons, short prompts) that explain:

What the AI is doing

What data it uses

Why it responds the way it does

Impact: Reduces the “black box” effect and builds trust through clarity.

OUTCOME

Our research and recommendations were presented to Salesforce partners and aligned with their concerns around student trust and ethical AI adoption. The findings provided Salesforce with actionable, research-backed guidance for designing AI education tools that prioritize transparency, consent, and student agency.

REFLECTION

This project reframed my understanding of UX research beyond usability and interfaces, emphasizing ethics, power, and accountability in system design. Working with an enterprise partner reinforced the importance of translating abstract ethical concerns into concrete, feasible design guidance.

The experience strengthened my interest in ethical UX, data privacy, and trust-centered design, particularly within education and healthcare contexts.